- Topic1/3

46 Popularity

4k Popularity

7k Popularity

3k Popularity

17k Popularity

- Pin

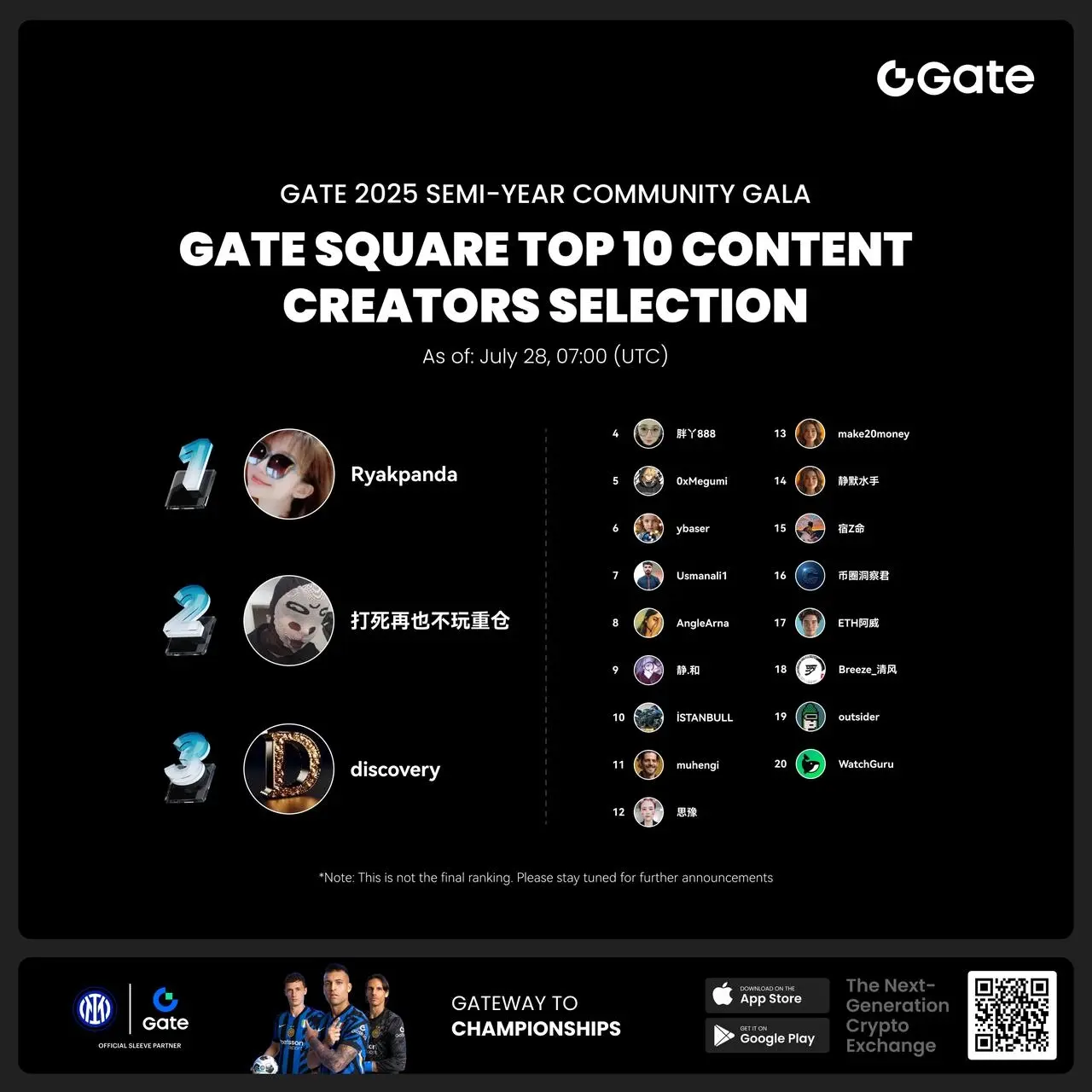

- #Gate 2025 Semi-Year Community Gala# voting is in progress! 🔥

Gate Square TOP 40 Creator Leaderboard is out

🙌 Vote to support your favorite creators: www.gate.com/activities/community-vote

Earn Votes by completing daily [Square] tasks. 30 delivered Votes = 1 lucky draw chance!

🎁 Win prizes like iPhone 16 Pro Max, Golden Bull Sculpture, Futures Voucher, and hot tokens.

The more you support, the higher your chances!

Vote to support creators now and win big!

https://www.gate.com/announcements/article/45974

- 🎉 Hey Gate Square friends! Non-stop perks and endless excitement—our hottest posting reward events are ongoing now! The more you post, the more you win. Don’t miss your exclusive goodies! 🚀

1️⃣ #ETH Hits 4800# | Market Analysis & Prediction: Boldly share your ETH predictions to showcase your insights! 10 lucky users will split a 0.1 ETH prize!

Details 👉 https://www.gate.com/post/status/12322612

2️⃣ #Creator Campaign Phase 2# |ZKWASM Topic: Share original content about ZKWASM or its trading activity on X or Gate Square to win a share of 4,000 ZKWASM!

Details 👉 https://www.gate.com/post/st

OpenLedger launches an AI model incentive chain based on OP Stack + EigenDA to build a composable agent economy.

OpenLedger Depth Research Report: Building a Data-Driven, Model-Composable Agent Economy on the Foundation of OP Stack + EigenDA

1. Introduction | The Model Layer Leap of Crypto AI

Data, models, and computing power are the three core elements of AI infrastructure, analogous to fuel (data), engine (models), and energy (computing power); none of them can be dispensed with. Following an evolution path similar to that of traditional AI infrastructure, the Crypto AI field has gone through comparable stages. In early 2024, the market was dominated by decentralized GPU projects and similar platforms, which emphasized a crude growth logic of "competing on computing power." Entering 2025, however, the industry's focus is gradually shifting to the model and data layers, marking Crypto AI's transition from competition over underlying resources to a more sustainable, application-value-driven build-out of the middle layer.

General Large Model (LLM) vs Specialized Model (SLM)

Traditional large language models (LLMs) rely heavily on large-scale datasets and complex distributed architectures, with parameter counts ranging from 70B to 500B, and a single training run can often cost several million dollars. In contrast, an SLM (Specialized Language Model) follows a lightweight fine-tuning paradigm built on a reusable foundation model. Starting from an open-source model such as LLaMA, Mistral, or DeepSeek, it combines a small amount of high-quality domain data with techniques like LoRA to quickly build expert models with specific domain knowledge, significantly lowering training costs and technical barriers.

Notably, an SLM is not merged into the LLM's weights. Instead, it works alongside the LLM through mechanisms such as invocation within an Agent architecture, dynamic routing via plugin systems, hot-swappable LoRA modules, and RAG (Retrieval-Augmented Generation). This architecture retains the LLM's broad coverage while adding specialized performance through fine-tuned modules, which yields a highly flexible, composable intelligent system.
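The "dynamic routing via plugin systems" and "hot-swappable" ideas above can be illustrated with a toy dispatcher. This is a minimal sketch under stated assumptions, not how any particular project implements routing. Each model is modeled as a plain function from prompt to text. Keyword matching stands in for whatever classifier a real router would use. The names `Router`, `register`, and `route` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical stand-in: a "model" is any function mapping a prompt to a reply.
Model = Callable[[str], str]


@dataclass
class Router:
    """Toy dispatcher: specialist SLMs are tried first, the general LLM is the fallback."""
    general: Model
    specialists: Dict[str, Model] = field(default_factory=dict)
    keywords: Dict[str, List[str]] = field(default_factory=dict)

    def register(self, name: str, model: Model, keywords: List[str]) -> None:
        # Registering under an existing name replaces the old module ("hot swap").
        self.specialists[name] = model
        self.keywords[name] = [k.lower() for k in keywords]

    def route(self, prompt: str) -> str:
        # Assumption: naive keyword matching stands in for a real intent classifier.
        text = prompt.lower()
        for name, words in self.keywords.items():
            if any(w in text for w in words):
                return name
        return "general"

    def answer(self, prompt: str) -> str:
        name = self.route(prompt)
        # Unknown names (including "general") fall back to the broad-coverage LLM.
        return self.specialists.get(name, self.general)(prompt)


router = Router(general=lambda p: "general: " + p)
router.register("legal", lambda p: "legal-SLM: " + p, ["contract", "statute"])
print(router.answer("Is this contract enforceable?"))  # handled by the legal specialist
print(router.answer("What is the weather today?"))     # falls back to the general model
```

The general model is never modified in this design. Specialists are simply added or replaced beside it, which is the property the paragraph above attributes to the SLM-plus-LLM arrangement.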

The value and boundaries of Crypto AI at the model layer

It is inherently difficult for Crypto AI projects to directly enhance the core capabilities of large language models (LLMs). The main reasons are:

However, by building on open-source foundation models, Crypto AI projects can still extend value. They can fine-tune specialized language models (SLMs) and incorporate Web3's verifiability and incentive mechanisms. Positioned as the "peripheral interface layer" of the AI industry chain, this value shows up in two core directions:

AI Model Type Classification and Blockchain Applicability Analysis

As this classification shows, the feasible entry points for model-focused Crypto AI projects lie mainly in three areas:

- lightweight fine-tuning of small SLMs;
- on-chain data access and verification in RAG architectures;
- local deployment and incentivization of Edge models.

By combining blockchain verifiability with token mechanisms, Crypto can provide unique value in these medium- to low-resource model scenarios, creating differentiated value for the AI "interface layer."

A blockchain-based AI chain built around data and models can record the source of every data and model contribution on-chain, clearly and immutably. This significantly improves data credibility and the traceability of model training. At the same time, smart contracts automatically distribute rewards whenever data or models are invoked, turning AI activity into measurable, tradable tokenized value and thereby building a sustainable incentive system. In addition, community users can evaluate model performance through token voting and take part in rule-making and iteration, strengthening decentralized governance.
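The paragraph above says rewards are distributed automatically when data or models are invoked, but it does not specify the formula. The sketch below assumes a simple pro-rata split of each invocation fee by contribution weight. Python stands in for contract logic here. It uses integer-only arithmetic, as an on-chain contract would, so payouts always sum exactly to the fee. The function name, contributor IDs, and rounding policy are hypothetical.

```python
from typing import Dict


def split_fee(fee: int, weights: Dict[str, int]) -> Dict[str, int]:
    """Split an invocation fee (in smallest token units) pro rata by contribution weight.

    Assumptions (hypothetical, not from the source): weights are non-negative
    integers, and any rounding remainder ("dust") goes to the largest contributor
    so that the payouts sum exactly to `fee`.
    """
    if any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative")
    total = sum(weights.values())
    if total == 0:
        raise ValueError("weights must sum to a positive value")
    # Floor division, as integer-only contract arithmetic would do it.
    payouts = {who: fee * w // total for who, w in weights.items()}
    dust = fee - sum(payouts.values())
    top = max(weights, key=weights.get)  # ties resolve to the first-listed contributor
    payouts[top] += dust
    return payouts


print(split_fee(1000, {"data_alice": 3, "data_bob": 1, "model_dev": 2}))
```

With a fee of 1000 and weights 3:1:2, floor division yields 500, 166, and 333. The single leftover unit goes to `data_alice`. Sending dust to one party is only one possible rounding policy; a real contract might instead carry it over to the next invocation.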

![OpenLedger Depth Research Report: Building a Data-Driven, Model-Composable Agent Economy Based on OP Stack + EigenDA](https://img-cdn.gateio.im/webp-social/moments-62b3fa1e810f4772aaba3d91c74c1aa6.webp)

2. Project Overview | The AI Chain Vision of OpenLedger

OpenLedger is one of the few blockchain AI projects on the market that focuses on data and model incentive mechanisms. It pioneers the concept of "Payable AI" with the aim of building a fair, transparent, and composable AI operating environment that incentivizes data contributors, model developers, and AI application builders to collaborate on the same platform and earn on-chain rewards based on their actual contributions.

OpenLedger provides a complete closed loop from "data provision" to "model deployment" and on to "revenue-sharing invocation." Its core modules include:

Through these modules, OpenLedger has built a data-driven, model-composable "agent economy infrastructure" that brings the AI value chain on-chain.

At the blockchain layer, OpenLedger builds on OP Stack + EigenDA to provide a high-performance, low-cost, and verifiable data and contract execution environment for AI models.

NEAR positions itself as a lower-level, general-purpose AI chain that emphasizes data sovereignty and the "AI Agents on BOS" architecture. OpenLedger, by contrast, focuses on building an AI-specific chain for data and model incentives. Its goal is to turn on-chain model development and invocation into a traceable, composable, and sustainable value loop. As model-incentive infrastructure for the Web3 world, it combines platform-style model hosting, usage-based billing, and on-chain composable interfaces to advance the path toward "models as assets."

![OpenLedger Depth Research Report: Building a Data-Driven, Model-Composable Agent Economy Based on OP Stack + EigenDA](https://img-cdn.gateio.im/webp-social/moments-19c2276fccc616ccf9260fb7e35c9c24.webp)

3. Core Components and Technical Architecture of OpenLedger

3.1 Model Factory, a no-code model factory

ModelFactory is the large language model (LLM) fine-tuning platform within the OpenLedger ecosystem. Unlike traditional fine-tuning frameworks, it offers a fully graphical interface, so no command-line tools or API integration are required. Users can fine-tune models on datasets that have been authorized and reviewed on OpenLedger, within one integrated workflow that covers data authorization, model training, and deployment. The core process includes:

The Model Factory system architecture consists of six major modules covering identity authentication, data permissions, model fine-tuning, evaluation and deployment, and RAG traceability. Together they form an integrated model service platform that is secure, controllable, interactive in real time, and sustainably monetizable.
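"RAG traceability" means that an answer can be traced back to the data contributions it drew on. The source does not describe how ModelFactory implements this. The sketch below is a minimal, hypothetical illustration of the general idea: every document carries a contributor ID, and retrieval returns citations alongside the prompt context. Simple word overlap stands in for the embedding-based vector search a real RAG system would use. The names `Doc`, `retrieve`, and `answer_with_citations` are assumptions.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass(frozen=True)
class Doc:
    doc_id: str
    contributor: str  # hypothetical: on-chain ID of the data contributor
    text: str


def tokenize(s: str) -> Set[str]:
    # Lowercase words with trailing/leading punctuation stripped.
    return {w.strip(".,?!").lower() for w in s.split() if w.strip(".,?!")}


def retrieve(query: str, corpus: List[Doc], k: int = 2) -> List[Tuple[Doc, int]]:
    """Rank documents by word overlap with the query (toy stand-in for vector search)."""
    q = tokenize(query)
    scored = [(d, len(q & tokenize(d.text))) for d in corpus]
    scored = [(d, s) for d, s in scored if s > 0]
    scored.sort(key=lambda pair: (-pair[1], pair[0].doc_id))  # best score first, stable by id
    return scored[:k]


def answer_with_citations(query: str, corpus: List[Doc], k: int = 2) -> dict:
    hits = retrieve(query, corpus, k)
    context = "\n".join(d.text for d, _ in hits)
    return {
        "prompt": f"Context:\n{context}\n\nQuestion: {query}",
        # Attribution record: which contributions this answer drew on.
        "citations": [(d.doc_id, d.contributor) for d, _ in hits],
    }


corpus = [
    Doc("d1", "alice", "LoRA freezes the base model weights."),
    Doc("d2", "bob", "EigenDA provides data availability."),
    Doc("d3", "carol", "LoRA adapters are small and swappable."),
]
print(answer_with_citations("How does LoRA keep base weights frozen?", corpus)["citations"])
```

The `citations` list is the piece a contribution-reward mechanism could consume. For example, it could be fed into a fee split like the one discussed in the section on incentives.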

![OpenLedger Depth Research Report: Building a Data-Driven, Composable Model Agent Economy Based on OP Stack + EigenDA](https://img-cdn.gateio.im/webp-social/moments-f23f47f09226573b1fcacebdcfb8c1f3.webp)

The following is a brief overview of the capabilities of large language models currently supported by ModelFactory:

Although OpenLedger's model lineup does not include the latest high-performance MoE models or multimodal models, its strategy is not outdated. Rather, it is a "practical-first" configuration based on the real constraints of on-chain deployment: inference costs, RAG adaptation, LoRA compatibility, and the EVM environment.

As a no-code toolchain, Model Factory builds a proof-of-contribution mechanism into every model, protecting the rights of data contributors and model developers. Compared with traditional model development tools, it offers lower barriers to entry, built-in monetization, and composability:

![OpenLedger Depth Research Report: Building a Data-Driven, Model-Composable Agent Economy Based on OP Stack + EigenDA](https://img-cdn.gateio.im/webp-social/moments-909dc3f796ad6aa44a1c97a51ade4193.webp)

3.2 OpenLoRA, on-chain assetization of fine-tuned models

LoRA (Low-Rank Adaptation) is a parameter-efficient fine-tuning method. It learns new tasks by inserting low-rank matrices into a pre-trained large model without modifying the original model parameters, which significantly reduces training costs and storage requirements. Traditional large language models (such as LLaMA and GPT-3) typically have billions or even hundreds of billions of parameters, and adapting them to specific tasks (such as legal Q&A or medical consultation) requires fine-tuning. LoRA's core strategy is to "freeze the parameters of the original large model and train only the newly inserted matrices." Its parameter efficiency, fast training, and flexible deployment make it the mainstream fine-tuning method best suited to Web3 model deployment and composable invocation.
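The "freeze the original weights, train only the inserted matrices" idea can be made concrete. For a frozen weight W of shape d×k, standard LoRA adds a trainable product B·A, where B is d×r and A is r×k with rank r much smaller than d and k. The forward pass becomes h = W x + (α/r) · B(A x). B is zero-initialized, so training starts exactly from the base model.

The sketch below is a minimal, dependency-free illustration of that formulation in plain Python. It is not OpenLoRA's code. The class name, the initialization scale, and the 4096×4096 layer size used in the parameter count are illustrative assumptions.

```python
import random
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, x: Vector) -> Vector:
    return [sum(a * b for a, b in zip(row, x)) for row in m]


class LoRALinear:
    """h = W x + (alpha / r) * B (A x); W stays frozen, only A and B would be trained."""

    def __init__(self, W: Matrix, r: int, alpha: float, seed: int = 0):
        d, k = len(W), len(W[0])
        rng = random.Random(seed)
        self.W = W  # frozen pre-trained weight, d x k
        # A: r x k, small random init (the 0.01 scale is an illustrative choice).
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(k)] for _ in range(r)]
        # B: d x r, zero init, so the low-rank update is exactly zero before training.
        self.B = [[0.0] * r for _ in range(d)]
        self.scale = alpha / r

    def forward(self, x: Vector) -> Vector:
        base = matvec(self.W, x)
        delta = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, delta)]


def trainable_params(d: int, k: int, r: int) -> int:
    return r * (d + k)  # A has r*k entries, B has d*r entries


layer = LoRALinear([[1.0, 2.0], [3.0, 4.0]], r=1, alpha=2.0)
print(layer.forward([1.0, 1.0]))  # identical to W x, because B starts at zero
# Illustrative layer size: a 4096 x 4096 weight adapted with rank 8.
print(trainable_params(4096, 4096, 8), 4096 * 4096)
```

For the illustrative 4096×4096 layer at rank 8, LoRA trains 65,536 parameters instead of 16,777,216, about 0.39% of the full matrix. This is why many adapters can be stored and swapped cheaply on top of one shared base model.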

OpenLoRA is a lightweight inference framework built by OpenLedger, specifically designed for multi-model deployment and resource sharing. Its core objective is to address common issues in current AI model deployment, such as high costs, low reuse, and GPU resource wastage, promoting the implementation of "Payable AI."
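The goals named above (multi-model deployment, resource sharing, and avoiding wasted GPU memory) are commonly met by one pattern. A single base model stays resident, and many small LoRA adapters are loaded on demand, with the least recently used ones evicted when memory runs out. The source does not describe OpenLoRA's internals. The sketch below only illustrates that general pattern. `AdapterServer`, the `load_adapter` callback, and the `capacity` limit are hypothetical.

```python
from collections import OrderedDict
from typing import Callable


class AdapterServer:
    """One shared base model; LoRA adapters are loaded on demand and evicted LRU-first."""

    def __init__(self, load_adapter: Callable[[str], object], capacity: int):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.load_adapter = load_adapter  # hypothetical loader, e.g. fetch weights from storage
        self.capacity = capacity          # max adapters held in (GPU) memory at once
        self.cache: "OrderedDict[str, object]" = OrderedDict()
        self.loads = 0                    # number of cold loads, for inspection

    def get(self, adapter_id: str) -> object:
        if adapter_id in self.cache:
            self.cache.move_to_end(adapter_id)  # mark as most recently used
            return self.cache[adapter_id]
        if len(self.cache) >= self.capacity:
            self.cache.popitem(last=False)      # evict the least recently used adapter
        adapter = self.load_adapter(adapter_id)
        self.loads += 1
        self.cache[adapter_id] = adapter
        return adapter

    def infer(self, adapter_id: str, prompt: str, base: Callable[[str, object], str]) -> str:
        # `base(prompt, adapter)` stands in for running the shared base model with the adapter applied.
        return base(prompt, self.get(adapter_id))


server = AdapterServer(lambda i: f"weights:{i}", capacity=2)
for adapter_id in ["legal", "medical", "legal", "finance"]:
    server.get(adapter_id)
print(list(server.cache), server.loads)  # "medical" was evicted; three cold loads in total
```

The trade-off is latency versus memory. A larger `capacity` means fewer cold loads but more memory held. The base model is loaded only once regardless of how many adapters are served.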

The core components of the OpenLoRA system architecture follow a modular design. They cover key stages such as model storage, inference execution, and request routing, enabling efficient, low-cost multi-model deployment and invocation: